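Preface

This article works through the following topics in order:

- Synchronous, asynchronous, blocking, and non-blocking

- The BIO and NIO models

- BIO and NIO implementations in Java, and their problems

- NIO and multiplexing

- Going further

The first implementation is always the plainest one; architecture then evolves naturally as requirements grow.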

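Background

When a process makes a system call, it triggers an interrupt.

The CPU is what actually executes instructions; a process is just a data structure that abstracts a CPU workflow. When the interrupt occurs, the CPU switches its working space from user memory to kernel memory and saves the context of the current workflow. When the system call completes, that context has to be restored. Compared with executing ordinary program logic, this round trip is expensive.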
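To get a feel for that cost, the sketch below writes the same bytes twice: once with one write system call per byte, and once through a user-space buffer that batches them into far fewer calls. The class name SyscallCost, the byte count, and the temp-file prefixes are illustrative assumptions. Absolute timings depend on the machine, so the program only prints them.

```java
import java.io.BufferedOutputStream;
import java.io.FileOutputStream;
import java.io.IOException;
import java.io.OutputStream;
import java.nio.file.Files;
import java.nio.file.Path;

class SyscallCost {
    // Writes `count` single bytes to `file` and returns the elapsed nanoseconds.
    // Unbuffered, every write() reaches the kernel; buffered, most stay in user space.
    static long timedWrite(Path file, int count, boolean buffered) throws IOException {
        OutputStream raw = new FileOutputStream(file.toFile());
        try (OutputStream out = buffered ? new BufferedOutputStream(raw) : raw) {
            long start = System.nanoTime();
            for (int i = 0; i < count; i++) {
                out.write('x');
            }
            out.flush();
            return System.nanoTime() - start;
        }
    }

    // Returns {unbufferedNanos, bufferedNanos, unbufferedFileSize, bufferedFileSize}.
    static long[] compare(int count) throws IOException {
        Path direct = Files.createTempFile("syscall-direct", ".bin");
        Path viaBuffer = Files.createTempFile("syscall-buffered", ".bin");
        try {
            long t1 = timedWrite(direct, count, false);
            long t2 = timedWrite(viaBuffer, count, true);
            return new long[] {t1, t2, Files.size(direct), Files.size(viaBuffer)};
        } finally {
            Files.deleteIfExists(direct);
            Files.deleteIfExists(viaBuffer);
        }
    }

    public static void main(String[] args) throws IOException {
        long[] r = compare(200_000);
        System.out.println("unbuffered: " + r[0] / 1_000_000 + " ms, buffered: "
                + r[1] / 1_000_000 + " ms");
    }
}
```

Both files end up with identical contents; only the number of transitions into the kernel differs.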

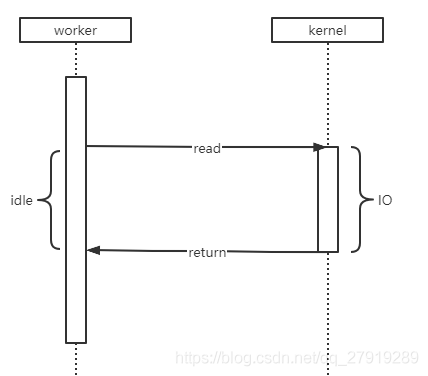

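Blocking model

In the blocking model, a system call returns only once it has finished, and the calling thread stays blocked the whole time. In an Internet application, each request that reaches the server gets a worker thread. Whenever that thread performs I/O it blocks, and with many concurrent requests this wastes a lot of resources.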

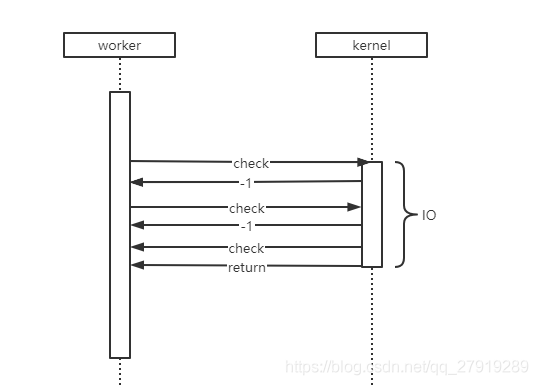

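Non-blocking model

In the non-blocking model, a kernel call returns immediately with the current status. This gives user-space programs room to optimize. For example, one thread can issue the system calls, another can monitor their status, and a thread pool can run the follow-up work once a call completes.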
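A minimal sketch of "returns immediately with the current status", assuming a java.nio.channels.Pipe as a stand-in for a network connection (the class name NonBlockingRead is made up for this example). With the reading end in non-blocking mode, read() reports 0 bytes when nothing has arrived instead of parking the thread.

```java
import java.io.IOException;
import java.nio.ByteBuffer;
import java.nio.channels.Pipe;
import java.nio.charset.StandardCharsets;

class NonBlockingRead {
    // Returns {bytes read while the pipe is empty, bytes read after a write}.
    static int[] demo() throws IOException {
        Pipe pipe = Pipe.open();
        try {
            pipe.source().configureBlocking(false);
            ByteBuffer buf = ByteBuffer.allocate(16);

            // Nothing has been written yet. A blocking read would wait here;
            // the non-blocking read returns 0 right away.
            int whileEmpty = pipe.source().read(buf);

            pipe.sink().write(ByteBuffer.wrap("hi".getBytes(StandardCharsets.UTF_8)));

            // Keep asking until the data is visible on the reading end.
            int afterWrite = 0;
            while (afterWrite == 0) {
                afterWrite = pipe.source().read(buf);
            }
            return new int[] {whileEmpty, afterWrite};
        } finally {
            pipe.sink().close();
            pipe.source().close();
        }
    }

    public static void main(String[] args) throws IOException {
        int[] r = demo();
        System.out.println("empty read returned " + r[0] + ", later read returned " + r[1]);
    }
}
```

The loop at the end is the price of this model: the caller has to come back and ask again, which is exactly the work the later sections try to reduce.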

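Synchronous and asynchronous

The debate over synchronous versus asynchronous has never stopped. Some say, "Synchronous means blocking, and asynchronous means non-blocking." Others say, "Synchronous means one thread does the work; asynchronous means two threads do."

Each statement captures part of the picture; they just look at the problem from different angles. An answer on Zhihu puts the distinction well.

Synchronous/asynchronous is about the message communication mechanism (synchronous communication / asynchronous communication).

- Synchronous means that once a call is issued, it does not return until the result is available.

- Asynchronous is the opposite: the call returns immediately after it is issued, so it returns without the result. The result is delivered later, for example through a status check, a notification, or a callback.

Blocking/non-blocking is about the state of the program while it waits for the result of the call (a message or a return value).

- A blocking call suspends the current thread until the result comes back. The calling thread resumes only once it has the result.

- A non-blocking call does not block the current thread, even when the result is not available right away.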
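The two definitions can be sketched with java.util.concurrent.CompletableFuture. The names SyncVsAsync, slowLookup, lookupSync, and lookupAsync are hypothetical, and the 100 ms sleep merely stands in for a slow operation such as a remote call.

```java
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.TimeUnit;

class SyncVsAsync {
    // Stand-in for a slow operation (hypothetical).
    static String slowLookup(String key) {
        try {
            Thread.sleep(100);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
        return "value-of-" + key;
    }

    // Synchronous: the call does not return until the result exists.
    static String lookupSync(String key) {
        return slowLookup(key);
    }

    // Asynchronous: the call returns at once; the result arrives later via the future.
    static CompletableFuture<String> lookupAsync(String key) {
        return CompletableFuture.supplyAsync(() -> slowLookup(key));
    }

    public static void main(String[] args) throws Exception {
        System.out.println("sync result: " + lookupSync("a"));

        CompletableFuture<String> future = lookupAsync("b");
        System.out.println("async call has returned, done yet? " + future.isDone());
        // The callback runs whenever the result becomes available.
        future.thenAccept(v -> System.out.println("callback got: " + v));
        // Whether to block while waiting is a separate decision made by the caller.
        System.out.println("async result: " + future.get(1, TimeUnit.SECONDS));
    }
}
```

Note that the last line combines an asynchronous call with a blocking wait, which is why the two pairs of terms are better kept apart.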

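Summary

- Blocking and non-blocking are operating-system-level concepts; the underlying structure determines what the upper layers can implement.

- Under the blocking model, even if an upper-layer interface returns immediately, the operating-system resource cost is not solved, because some thread is still blocked underneath.

- The non-blocking model makes I/O multiplexing and asynchronous I/O possible.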

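BIO and NIO

With those concepts in place, the BIO and NIO models should be easier to understand.

| | BIO | NIO |
|---|---|---|
| I/O model | Blocking | Non-blocking |
| Sync / async | Synchronous | Supports asynchronous |
| Advantage | Simple | Multiplexing |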

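BIO (Blocking IO)

A single-threaded blocking I/O implementation

    public static void main(String[] args) throws IOException {
        ServerSocket serverSocket = new ServerSocket(8080);
        System.out.println("Server Start!");
        while (true) {
            // Blocking point 1: waits until a client connects
            Socket socket = serverSocket.accept();
            System.out.println("Client connect:");
            byte[] bytes = new byte[1024];
            // Blocking point 2: waits until the client sends data
            int read = socket.getInputStream().read(bytes);
            // Decode only the bytes actually read, not the whole 1024-byte buffer
            if (read > 0) {
                System.out.println("receive data :" + new String(bytes, 0, read));
            }
        }
    }

Let's look at what happens at each of the two blocking points.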

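accept()

We found this code in rt.jar:

    InetSocketAddress[] isaa = new InetSocketAddress[1];
    if (timeout <= 0) {
        newfd = accept0(nativefd, isaa);
    }

Seeing fd is a strong hint that a system call is involved, because on Linux every resource has a corresponding file descriptor (fd). The result of accept0() is assigned to the int variable newfd. accept0 is a native method whose logic lives in the JDK's native source. We won't go into the JDK source here and will look at the corresponding system call directly.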

    $ man accept

    NAME
        accept - accept a connection on a socket

    SYNOPSIS
        #include <sys/types.h>          /* See NOTES */
        #include <sys/socket.h>

        int accept(int sockfd, struct sockaddr *addr, socklen_t *addrlen);

        #define _GNU_SOURCE
        #include <sys/socket.h>

        int accept4(int sockfd, struct sockaddr *addr,
                    socklen_t *addrlen, int flags);

    DESCRIPTION
        The accept() system call is used with connection-based socket types (SOCK_STREAM,
        SOCK_SEQPACKET). It extracts the first connection request on the queue of pending
        connections for the listening socket, sockfd, creates a new connected socket, and
        returns a new file descriptor referring to that socket. The newly created socket
        is not in the listening state. The original socket sockfd is unaffected by this
        call.

        The argument sockfd is a socket that has been created with socket(2), bound to a
        local address with bind(2), and is listening for connections after a listen(2).

        The argument addr is a pointer to a sockaddr structure. This structure is filled
        in with the address of the peer socket, as known to the communications layer. The
        exact format of the address returned addr is determined by the socket's address
        family (see socket(2) and the respective protocol man pages). When addr is NULL,
        nothing is filled in; in this case, addrlen is not used, and should also be NULL.

    RETURN VALUE
        On success, these system calls return a non-negative integer that is a descriptor
        for the accepted socket. On error, -1 is returned, and errno is set appropriately.

In short, accept is a system call on sockets. It takes the first pending connection request off the listening socket's queue, creates a new connected socket for it, and returns the file descriptor of that new socket; the listening socket itself is unaffected. If no connection is pending and the socket is in blocking mode, the call blocks until one arrives.
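The behaviour described in the man page can be observed from Java with a minimal sketch. The class name AcceptDemo is made up, and port 0 (let the OS pick a free port) is an assumption chosen so that the example does not collide with the 8080 used elsewhere in this article.

```java
import java.io.IOException;
import java.net.InetAddress;
import java.net.ServerSocket;
import java.net.Socket;

class AcceptDemo {
    // Returns true if accept() handed back a new socket connected to our client
    // while the listening socket stayed open and bound.
    static boolean acceptReturnsNewSocket() throws IOException {
        InetAddress loopback = InetAddress.getLoopbackAddress();
        try (ServerSocket listening = new ServerSocket(0, 50, loopback);
             // The kernel completes this connection and queues it before accept() runs.
             Socket client = new Socket(loopback, listening.getLocalPort());
             // accept() takes the pending connection off the queue.
             Socket accepted = listening.accept()) {
            // The accepted socket's remote port is the client's local port.
            boolean samePeer = accepted.getPort() == client.getLocalPort();
            // The original listening socket is unaffected by the call.
            boolean stillListening = listening.isBound() && !listening.isClosed();
            return samePeer && stillListening;
        }
    }

    public static void main(String[] args) throws IOException {
        System.out.println("accept returned a new connected socket: " + acceptReturnsNewSocket());
    }
}
```

Here accept() returns without a visible wait because the connection is already sitting in the queue; with an empty queue the same call would block.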

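read()

The file descriptor obtained from accept is injected as a field, and then socketRead0 does the rest.

    // Inject the file descriptor and socket as fields
    SocketInputStream(AbstractPlainSocketImpl impl) throws IOException {
        super(impl.getFileDescriptor());
        this.impl = impl;
        socket = impl.getSocket();
    }

    // Call the native method to read
    private int socketRead(FileDescriptor fd, byte b[], int off, int len, int timeout) throws IOException {
        return socketRead0(fd, b, off, len, timeout);
    }

Since both blocking calls can take a long time, the single-threaded implementation is very inefficient: while the thread waits in read() for one client, no other client can be accepted.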
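To see read() waiting, the sketch below connects a client that never sends anything and puts a read timeout on the accepted socket with Socket.setSoTimeout. The class name ReadBlocksDemo and the 200 ms value are illustrative assumptions; as far as I can tell from the JDK 8 source, this socket timeout is what ends up in the timeout argument of socketRead0, but treat that link as an assumption too.

```java
import java.io.IOException;
import java.net.InetAddress;
import java.net.ServerSocket;
import java.net.Socket;
import java.net.SocketTimeoutException;

class ReadBlocksDemo {
    // Returns true if read() was still waiting for data when the timeout expired.
    static boolean readTimesOut(int timeoutMillis) throws IOException {
        InetAddress loopback = InetAddress.getLoopbackAddress();
        try (ServerSocket server = new ServerSocket(0, 50, loopback);
             Socket client = new Socket(loopback, server.getLocalPort());
             Socket accepted = server.accept()) {
            // Without a timeout, the read below would wait indefinitely.
            accepted.setSoTimeout(timeoutMillis);
            try {
                // The client never sends anything, so there is nothing to read.
                accepted.getInputStream().read(new byte[1024]);
                return false;
            } catch (SocketTimeoutException e) {
                return true;
            }
        }
    }

    public static void main(String[] args) throws IOException {
        System.out.println("read() gave up after waiting: " + readTimesOut(200));
    }
}
```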

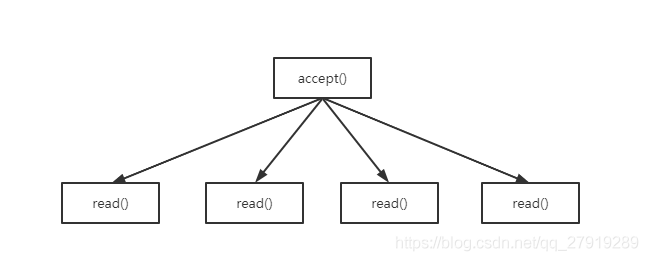

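A multi-threaded blocking I/O implementation

    public static void main(String[] args) throws IOException {
        ServerSocket serverSocket = new ServerSocket(8080);
        System.out.println("Server Start!");
        while (true) {
            Socket socket = serverSocket.accept();
            // Handle each connection on its own thread
            new Thread(() -> {
                System.out.println("Client connect:");
                byte[] bytes = new byte[1024];
                try {
                    int read = socket.getInputStream().read(bytes);
                    // Decode only the bytes actually read
                    if (read > 0) {
                        System.out.println("receive data :" + new String(bytes, 0, read));
                    }
                } catch (IOException e) {
                    e.printStackTrace();
                }
            }).start();
        }
    }

Multithreading makes the server more responsive, but in practice most of those threads are blocked at the same time, each one still holding its own stack and adding scheduling overhead.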

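NIO (Nonblocking IO)

    // Connections accepted so far (the original snippet did not show this declaration)
    private static final List<SocketChannel> channelList = new ArrayList<>();

    public static void main(String[] args) throws IOException {
        // Start the server in non-blocking mode
        ServerSocketChannel serverSocket = ServerSocketChannel.open();
        serverSocket.bind(new InetSocketAddress(8080));
        serverSocket.configureBlocking(false);
        while (true) {
            // Save the connection; accept() returns null at once if none is pending
            SocketChannel socketChannel = serverSocket.accept();
            if (socketChannel != null) {
                socketChannel.configureBlocking(false);
                channelList.add(socketChannel);
            }
            // Poll every connection
            Iterator<SocketChannel> iterator = channelList.iterator();
            while (iterator.hasNext()) {
                doWork(iterator.next(), iterator);
            }
        }
    }

    // Do the work for one connection
    private static void doWork(SocketChannel socketChannel, Iterator<SocketChannel> iterator) throws IOException {
        ByteBuffer byteBuffer = ByteBuffer.allocate(1024);
        int read = socketChannel.read(byteBuffer);
        if (read > 0) {
            // do something
        } else if (read == -1) {
            // The connection is closed. Remove it through the iterator: calling
            // channelList.remove() mid-iteration can throw ConcurrentModificationException.
            iterator.remove();
            socketChannel.close();
        }
    }

The server keeps the current connections in a container and polls each one with read() to check whether the client has written any data. This lets a single thread handle concurrent connections. But if only a small fraction of the connections are transferring data at any moment, walking through all of them on every pass is clearly not an efficient strategy.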
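The waste is easy to count. In the sketch below, java.nio.channels.Pipe objects stand in for client connections (an assumption made so that no real clients are needed), and PollingWaste, onePass, and the connection counts are made-up names and numbers. Only one "connection" has sent data, yet one pass over the list still costs one read() per connection.

```java
import java.io.IOException;
import java.nio.ByteBuffer;
import java.nio.channels.Pipe;
import java.util.ArrayList;
import java.util.List;

class PollingWaste {
    // Returns {reads that found data, reads that found nothing} for one polling pass.
    static int[] onePass(int connections, int activeIndex) throws IOException {
        List<Pipe> pipes = new ArrayList<>();
        try {
            for (int i = 0; i < connections; i++) {
                Pipe p = Pipe.open();
                p.source().configureBlocking(false);
                pipes.add(p);
            }
            // Only one "connection" sends anything.
            pipes.get(activeIndex).sink().write(ByteBuffer.wrap(new byte[] {1}));

            int useful = 0;
            int wasted = 0;
            for (Pipe p : pipes) {
                // One system call per connection, whether or not it has data.
                int n = p.source().read(ByteBuffer.allocate(16));
                if (n > 0) {
                    useful++;
                } else {
                    wasted++;
                }
            }
            return new int[] {useful, wasted};
        } finally {
            for (Pipe p : pipes) {
                p.sink().close();
                p.source().close();
            }
        }
    }

    public static void main(String[] args) throws IOException {
        int[] r = onePass(100, 42);
        System.out.println("useful reads: " + r[0] + ", wasted reads: " + r[1]);
    }
}
```

On a typical run all but one of the reads come back empty, and each of them still paid for a trip into the kernel.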

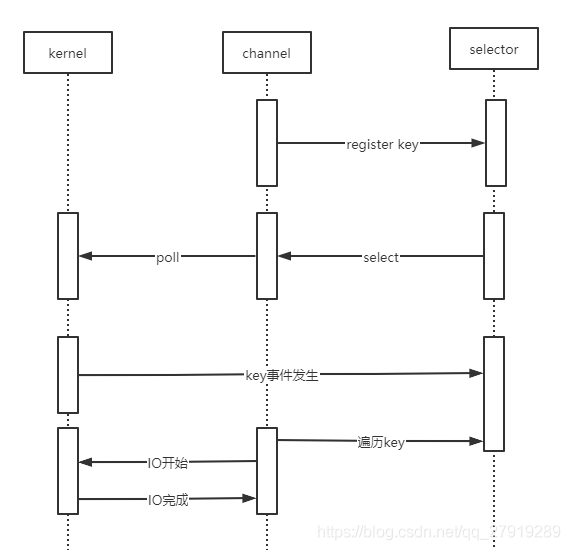

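NIO multiplexing

The operating system provides the non-blocking model so that a single thread can handle concurrent requests. It likewise provides multiplexing, which removes the pointless iteration.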

    // Start the server in non-blocking mode
    ServerSocketChannel serverSocket = ServerSocketChannel.open();
    serverSocket.bind(new InetSocketAddress(8080));
    serverSocket.configureBlocking(false);
    // Register interest in accept events
    Selector selector = Selector.open();
    serverSocket.register(selector, SelectionKey.OP_ACCEPT);
    while (true) {
        // Blocks until at least one registered channel is ready
        selector.select();
        // Get all ready events
        Set<SelectionKey> selectionKeys = selector.selectedKeys();
        Iterator<SelectionKey> iterator = selectionKeys.iterator();
        // Iterate over the ready events
        while (iterator.hasNext()) {
            SelectionKey key = iterator.next();
            iterator.remove();
            // On OP_ACCEPT, accept the connection and register it for read events
            if (key.isAcceptable()) {
                ServerSocketChannel server = (ServerSocketChannel) key.channel();
                SocketChannel socketChannel = server.accept();
                if (socketChannel != null) {
                    socketChannel.configureBlocking(false);
                    socketChannel.register(selector, SelectionKey.OP_READ);
                }
            }
            // On OP_READ, the channel has data to read
            if (key.isReadable()) {
                // do something
            }
        }
    }

Looking at the source, we find that on Windows the NIO select() method ends up calling a native method named poll0:

    private native int poll0(long var1, int var3, int[] var4, int[] var5, int[] var6, long var7);

Its job is comparable to the POSIX poll function, so we continue with man poll to see what that does.

    NAME
        poll, ppoll - wait for some event on a file descriptor

    SYNOPSIS
        #include <poll.h>

        int poll(struct pollfd *fds, nfds_t nfds, int timeout);

        #define _GNU_SOURCE
        #include <poll.h>

        int ppoll(struct pollfd *fds, nfds_t nfds,
                  const struct timespec *timeout, const sigset_t *sigmask);

    DESCRIPTION
        poll() performs a similar task to select(2): it waits for one of a set of file
        descriptors to become ready to perform I/O.

        The set of file descriptors to be monitored is specified in the fds argument,
        which is an array of nfds structures of the following form:

            struct pollfd {
                int   fd;         /* file descriptor */
                short events;     /* requested events */
                short revents;    /* returned events */
            };

        The field fd contains a file descriptor for an open file.

        The field events is an input parameter, a bit mask specifying the events the
        application is interested in.

        The field revents is an output parameter, filled by the kernel with the events
        that actually occurred. The bits returned in revents can include any of those
        specified in events, or one of the values POLLERR, POLLHUP, or POLLNVAL. (These
        three bits are meaningless in the events field, and will be set in the revents
        field whenever the corresponding condition is true.)

    RETURN VALUE
        On success, a positive number is returned; this is the number of structures which
        have non-zero revents fields (in other words, those descriptors with events or
        errors reported). A value of 0 indicates that the call timed out and no file
        descriptors were ready. On error, -1 is returned, and errno is set appropriately.

poll watches a set of file descriptors to see whether any of them is ready for I/O. The principle is similar to iterating over the collection and checking each state as in the code above, except that the operating system does the polling, within a single system call.
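The same idea can be tried from Java without a network. In this sketch, java.nio.channels.Pipe objects again stand in for client connections, and the names SelectorReadiness and readyAfterOneWrite are made up. Every reading end is registered with one Selector, a single byte is written to one pipe, and one select() call reports which channel is ready.

```java
import java.io.IOException;
import java.nio.ByteBuffer;
import java.nio.channels.Pipe;
import java.nio.channels.SelectionKey;
import java.nio.channels.Selector;
import java.util.ArrayList;
import java.util.List;

class SelectorReadiness {
    // Returns {number of ready channels reported, index of the ready channel or -1}.
    static int[] readyAfterOneWrite(int connections, int activeIndex) throws IOException {
        List<Pipe> pipes = new ArrayList<>();
        try (Selector selector = Selector.open()) {
            for (int i = 0; i < connections; i++) {
                Pipe p = Pipe.open();
                p.source().configureBlocking(false);
                // Attach the index so we can tell which channel became ready.
                p.source().register(selector, SelectionKey.OP_READ, i);
                pipes.add(p);
            }
            pipes.get(activeIndex).sink().write(ByteBuffer.wrap(new byte[] {1}));

            // One call asks the OS about every registered channel (waits at most 1 second).
            int ready = selector.select(1000);
            int index = -1;
            for (SelectionKey key : selector.selectedKeys()) {
                index = (Integer) key.attachment();
            }
            return new int[] {ready, index};
        } finally {
            for (Pipe p : pipes) {
                p.sink().close();
                p.source().close();
            }
        }
    }

    public static void main(String[] args) throws IOException {
        int[] r = readyAfterOneWrite(100, 42);
        System.out.println("ready channels: " + r[0] + ", ready index: " + r[1]);
    }
}
```

Compared with reading from every channel in turn, the application now touches only the channel that actually has data.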

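Going further

The source of select() on Linux differs noticeably from the Windows version.

Windows

    this.subSelector.poll();

Linux

    pollWrapper.poll(timeout);

pollWrapper wraps the epoll functions.

Running man epoll:

    NAME
        epoll - I/O event notification facility

    SYNOPSIS
        #include <sys/epoll.h>

    DESCRIPTION
        epoll is a variant of poll(2) that can be used either as an edge-triggered or a
        level-triggered interface and scales well to large numbers of watched file
        descriptors. The following system calls are provided to create and manage an
        epoll instance:

        *  An epoll instance created by epoll_create(2), which returns a file descriptor
           referring to the epoll instance. (The more recent epoll_create1(2) extends the
           functionality of epoll_create(2).)

        *  Interest in particular file descriptors is then registered via epoll_ctl(2).
           The set of file descriptors currently registered on an epoll instance is
           sometimes called an epoll set.

        *  Finally, the actual wait is started by epoll_wait(2).

epoll is an I/O event notification facility and an upgraded version of poll for watching file descriptors for events. It is normally used through three calls together: epoll_create (creates the instance), epoll_ctl (manages the set of watched descriptors), and epoll_wait (waits for events). Unlike poll, the set of watched descriptors stays registered in the kernel between calls, and epoll_wait returns only the descriptors that are ready.

For the differences between epoll and poll, see the article 《深入理解select、poll和epoll及区别》 ("Understanding select, poll, and epoll in depth, and their differences").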
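Java does not expose epoll directly, but you can at least ask the JDK which selector implementation it picked on the current platform. The sketch below uses the standard java.nio.channels.spi.SelectorProvider API; the class name WhichSelector is made up, and the printed name depends on the operating system and JDK build.

```java
import java.nio.channels.spi.SelectorProvider;

class WhichSelector {
    // Returns the class name of the platform's default selector provider.
    static String providerName() {
        return SelectorProvider.provider().getClass().getName();
    }

    public static void main(String[] args) {
        System.out.println("default selector provider: " + providerName());
    }
}
```

On Linux builds of OpenJDK this typically names an epoll-based provider, while other platforms report a different class.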

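Conclusion

Optimizing the I/O model does not make the I/O itself faster. It cuts down the number of wasted I/O calls and system interrupts, and so improves resource utilization.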