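Overview:

Sleuth traces service call chains by adding tracing data (trace and span IDs) to the logs;

Zipkin is a distributed real-time tracing system that can display the data collected by Sleuth as a monitoring dashboard.

ELK (Elasticsearch + Logstash + Kibana): lets you search all upstream and downstream logs by Trace ID.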

I. Set up the Zipkin server:

Address: http://localhost:9411/

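1. Option 1 (recommended): download the official package zipkin-server-2.12.9-exec.jar and start it with:

```
D:\javaee_workspace\SpringCloud-Sleuth\ZipkinServer>java -jar zipkin-server-2.12.9-exec.jar
```

2. Option 2: build your own Zipkin server project and start it:

(1) Add the Zipkin dependencies: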

```xml
<project ...>
    ...
    <dependencies>
        <!-- Eureka client -->
        <dependency>
            <groupId>org.springframework.cloud</groupId>
            <artifactId>spring-cloud-starter-netflix-eureka-client</artifactId>
        </dependency>
        <!-- Zipkin server -->
        <dependency>
            <groupId>io.zipkin.java</groupId>
            <artifactId>zipkin-server</artifactId>
            <version>2.12.9</version>
        </dependency>
        <!-- Zipkin web UI -->
        <dependency>
            <groupId>io.zipkin.java</groupId>
            <artifactId>zipkin-autoconfigure-ui</artifactId>
            <version>2.12.9</version>
        </dependency>
    </dependencies>
    <build>
        <plugins>
            <plugin>
                <groupId>org.springframework.boot</groupId>
                <artifactId>spring-boot-maven-plugin</artifactId>
                <configuration>
                    <mainClass>com.yyh.zipkinserver.ZipkinServerApplication</mainClass>
                </configuration>
                <executions>
                    <execution>
                        <goals>
                            <goal>repackage</goal>
                        </goals>
                    </execution>
                </executions>
            </plugin>
        </plugins>
    </build>
</project>
```

(2) Create the Application class:

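```java
package com.yyh.zipkinserver;

import org.springframework.boot.SpringApplication;
import org.springframework.boot.autoconfigure.SpringBootApplication;
import org.springframework.cloud.client.discovery.EnableDiscoveryClient;
import zipkin2.server.internal.EnableZipkinServer;

@EnableZipkinServer      // enable the Zipkin server
@SpringBootApplication   // Spring Boot application class
@EnableDiscoveryClient   // register with the service registry (Eureka)
public class ZipkinServerApplication {

    public static void main(String[] args) {
        SpringApplication.run(ZipkinServerApplication.class, args); // start the application
    }
}
```

(3) Create application.yml: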

```yaml
spring:
  application:
    name: ZipkinServer
  main:
    allow-bean-definition-overriding: true
server: # port of this web service
  port: 9411
eureka:
  client:
    service-url: # address of the Eureka Server
      defaultZone: http://localhost:10000/eureka/
management:
  metrics:
    web:
      server:
        auto-time-requests: false # without this, error logs are printed in the console
```

3. Open a browser and visit the Zipkin home page to check the result:

http://localhost:9411

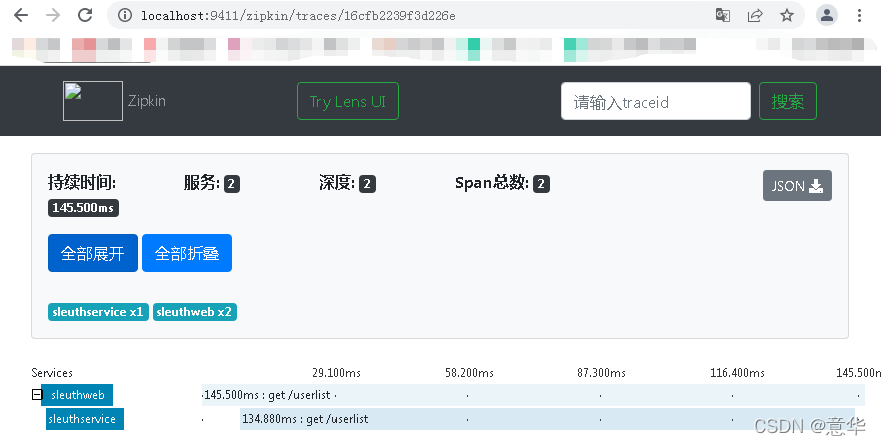

截图如下(截图时已完成《三、Sleuth集成ELK实现日志查看/搜索》):
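Besides the browser, you can check from code that the server is running and that services have reported spans. The sketch below calls the Zipkin v2 HTTP API endpoint `GET /api/v2/services`, which returns a JSON array of the service names Zipkin has received spans from. It assumes Java 11+ (for `java.net.http.HttpClient`) and the default address `http://localhost:9411/` used above; the class name `ZipkinServicesCheck` is hypothetical.

```java
import java.io.IOException;
import java.net.URI;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;
import java.time.Duration;

// Hypothetical helper: lists the services that have reported spans to Zipkin.
public class ZipkinServicesCheck {

    // Builds the v2 "services" endpoint from a base URL, with or without a trailing slash.
    public static URI servicesUri(String baseUrl) {
        String base = baseUrl.endsWith("/") ? baseUrl.substring(0, baseUrl.length() - 1) : baseUrl;
        return URI.create(base + "/api/v2/services");
    }

    // Sends GET /api/v2/services and returns the raw JSON body (an array of service names).
    public static String fetchServices(String baseUrl) throws IOException, InterruptedException {
        HttpClient client = HttpClient.newBuilder()
                .connectTimeout(Duration.ofSeconds(3))
                .build();
        HttpRequest request = HttpRequest.newBuilder(servicesUri(baseUrl))
                .timeout(Duration.ofSeconds(5))
                .GET()
                .build();
        HttpResponse<String> response = client.send(request, HttpResponse.BodyHandlers.ofString());
        if (response.statusCode() != 200) {
            throw new IOException("Zipkin returned HTTP " + response.statusCode());
        }
        return response.body();
    }

    public static void main(String[] args) throws Exception {
        String baseUrl = args.length > 0 ? args[0] : "http://localhost:9411/";
        System.out.println(fetchServices(baseUrl));
    }
}
```

An empty array `[]` means the server is up but no instrumented service has reported yet; once Part III is configured and a request has gone through, the service names should appear.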

II. Install the ELK server:

Environment: Docker

Host IP: 192.168.233.147

1. Pull the ELK image:

```
[root@localhost ~]# docker pull docker.io/sebp/elk
```

2. Create the ELK container (myElk is the container name):

```
[root@localhost ~]# docker run -p 5601:5601 -p 9200:9200 -p 5044:5044 -e ES_MIN_MEM=128m -e ES_MAX_MEM=1024m -it --name myElk sebp/elk
```

3. Open a shell inside the myElk container (/bin/bash is the shell to run):

```
[root@localhost ~]# docker exec -it myElk /bin/bash
```

4. Edit the ELK configuration file 02-beats-input.conf:

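```
root@78a996377681:/# vim /etc/logstash/conf.d/02-beats-input.conf
```

Change its content to the following (press Esc, then type `:wq` to save and quit). Note that Logstash configuration files use `#` for comments, not `//`:

```
input {                           # receiving side
  tcp {
    port => 5044                  # listen on port 5044
    codec => json_lines           # each log event is one line of JSON
  }
}

output {                          # sending side: forward the logs to Elasticsearch
  elasticsearch {
    hosts => ["localhost:9200"]   # Elasticsearch server address
  }
}
```

Exit the myElk container shell: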

```
root@78a996377681:/# exit
```

5. Restart the ELK container:

```
[root@localhost ~]# docker restart myElk
```

6. Open a browser and visit the Kibana home page to check the result:

http://192.168.233.147:5601
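Before creating the index pattern, you can check that the Logstash `tcp` input from step 4 accepts events by sending one JSON line by hand. With `codec => json_lines`, Logstash reads one JSON object per newline-terminated line. A minimal sketch, assuming the host `192.168.233.147` and port `5044` from this article; the class `LogstashLineSender`, its methods and the test event are hypothetical.

```java
import java.io.IOException;
import java.io.OutputStream;
import java.net.InetSocketAddress;
import java.net.Socket;
import java.nio.charset.StandardCharsets;

// Hypothetical test client for the Logstash tcp input configured with codec => json_lines.
public class LogstashLineSender {

    // json_lines framing: one JSON document per line, so the payload must not contain a raw newline.
    public static byte[] frame(String json) {
        if (json.indexOf('\n') >= 0 || json.indexOf('\r') >= 0) {
            throw new IllegalArgumentException("a json_lines event must fit on a single line");
        }
        return (json + "\n").getBytes(StandardCharsets.UTF_8);
    }

    // Opens a TCP connection, writes one framed event and closes the socket.
    public static void send(String host, int port, String json) throws IOException {
        try (Socket socket = new Socket()) {
            socket.connect(new InetSocketAddress(host, port), 3000); // 3 s connect timeout
            OutputStream out = socket.getOutputStream();
            out.write(frame(json));
            out.flush();
        }
    }

    public static void main(String[] args) throws IOException {
        String event = "{\"severity\":\"INFO\",\"service\":\"manual-test\",\"rest\":\"hello from LogstashLineSender\"}";
        send("192.168.233.147", 5044, event);
    }
}
```

If the input works, the event should become searchable in Kibana (for example with `service:manual-test`) once the index pattern below exists.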

(1)创建index pattern:

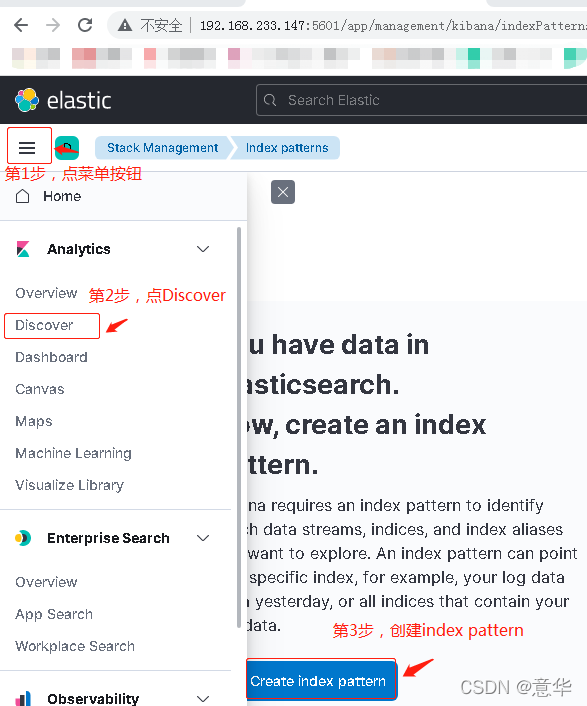

点击左上角菜单图标 -> 左侧列表中选择Discover -> 点击中间"Create index pattern"按钮,如图:

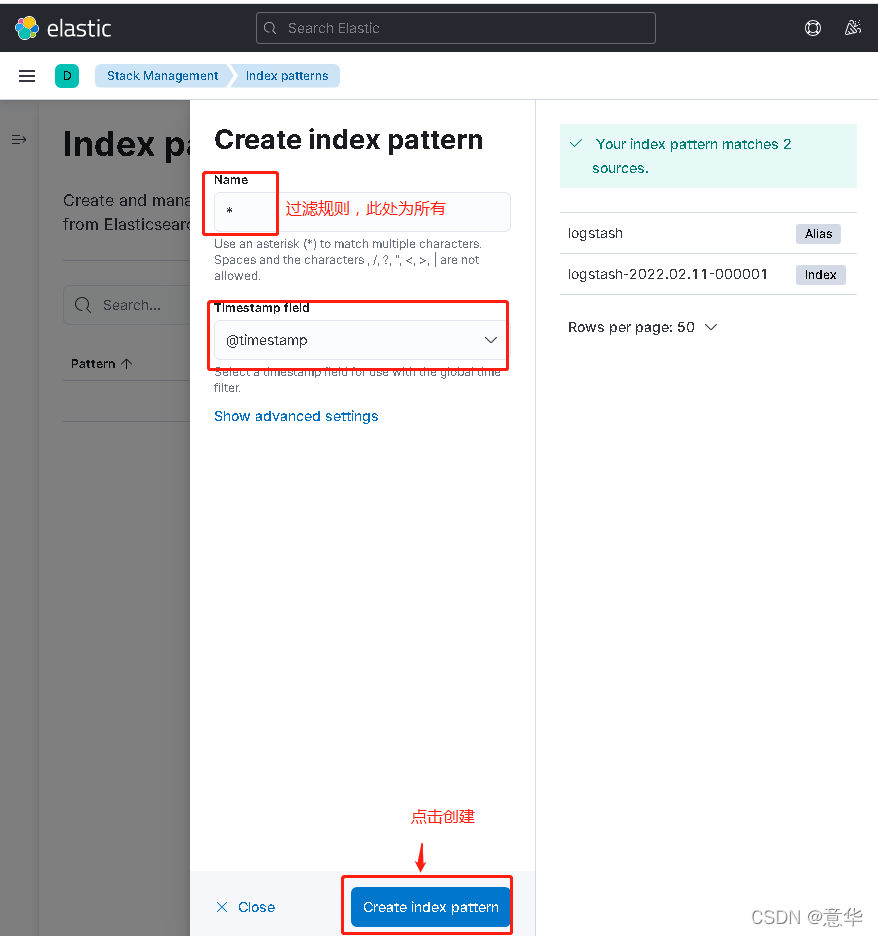

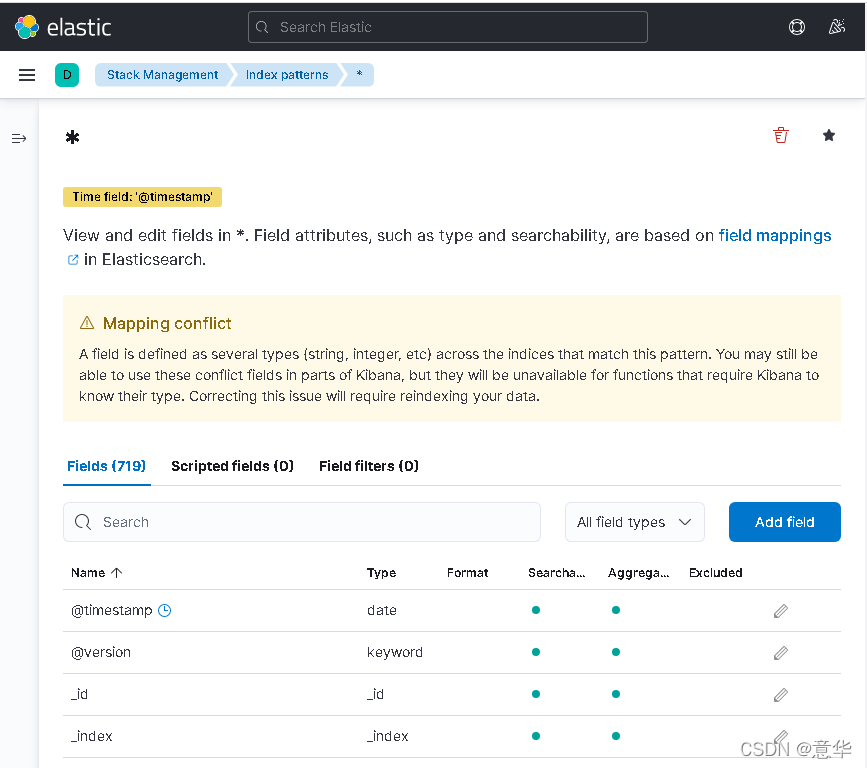

在弹出界面中,Name输入*,Timestamp field选择@timestamp,点击底部"Create index pattern"按钮创建,如图:

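(2) To find specific log entries, type a query such as `pid:4860` into the search bar (or add a `pid` filter under Filters), or search for the trace ID directly. Both values can be taken from the service's console output. In the line below, the brackets hold the application name (empty here), the trace ID, the span ID and the export flag, and 4860 is the process ID:

```
INFO [,0c4d974f0a4b0ce6,0c4d974f0a4b0ce6,true] 4860
```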
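The trace ID is what ties the logs of different services together: Sleuth prints it in the console prefix, and the logback configuration in Part III writes the same value into the `trace` JSON field. The sketch below extracts the fields from a console line and builds an Elasticsearch search body for that trace, which could be POSTed to `http://192.168.233.147:9200/logstash-*/_search` with `Content-Type: application/json`. The class `TraceLookup` is hypothetical, and the `logstash-*` index name assumes Logstash's default index naming.

```java
import java.util.regex.Matcher;
import java.util.regex.Pattern;

// Hypothetical helper: reads the Sleuth fields from a console log line and builds an
// Elasticsearch query for every log event that shares the same trace ID.
public class TraceLookup {

    // Matches "[appName,traceId,spanId,exportable]"; appName may be empty.
    private static final Pattern SLEUTH_PREFIX =
            Pattern.compile("\\[([^,\\]]*),([0-9a-f]+),([0-9a-f]+),(true|false)\\]");

    // Returns {appName, traceId, spanId, exportable}.
    public static String[] parse(String logLine) {
        Matcher m = SLEUTH_PREFIX.matcher(logLine);
        if (!m.find()) {
            throw new IllegalArgumentException("no Sleuth trace prefix in: " + logLine);
        }
        return new String[] {m.group(1), m.group(2), m.group(3), m.group(4)};
    }

    // Search body: match on the "trace" field defined in logback-spring.xml, oldest event first.
    public static String traceQuery(String traceId) {
        if (!traceId.matches("[0-9a-f]+")) {
            throw new IllegalArgumentException("trace IDs are lowercase hex: " + traceId);
        }
        return "{\"query\":{\"match\":{\"trace\":\"" + traceId + "\"}},"
                + "\"sort\":[{\"@timestamp\":{\"order\":\"asc\"}}]}";
    }

    public static void main(String[] args) {
        String[] fields = parse("INFO [,0c4d974f0a4b0ce6,0c4d974f0a4b0ce6,true] 4860");
        System.out.println("traceId=" + fields[1] + " spanId=" + fields[2] + " exportable=" + fields[3]);
        System.out.println(traceQuery(fields[1]));
    }
}
```

In Kibana the equivalent search is simply `trace:0c4d974f0a4b0ce6`.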

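III. Integrating Sleuth with ELK for viewing/searching logs (configure this in both the microservice project and the project that calls it):

1. Add the Sleuth, Zipkin client and Logstash dependencies to the project: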

```xml
<dependencies>
    ...
    <!-- Sleuth -->
    <dependency>
        <groupId>org.springframework.cloud</groupId>
        <artifactId>spring-cloud-starter-sleuth</artifactId>
    </dependency>
    <!-- Zipkin client -->
    <dependency>
        <groupId>org.springframework.cloud</groupId>
        <artifactId>spring-cloud-starter-zipkin</artifactId>
    </dependency>
    <!-- Logstash encoder for Logback -->
    <dependency>
        <groupId>net.logstash.logback</groupId>
        <artifactId>logstash-logback-encoder</artifactId>
        <version>7.0</version>
    </dependency>
</dependencies>
```

2. Configure Sleuth and the Zipkin server information in application.yml:

```yaml
spring:
  ...
  zipkin: # Zipkin server settings; see the ZipkinProperties class for more properties
    #discovery-client-enabled: true # enable when the Zipkin server address is looked up from the registry
    base-url: http://localhost:9411/ # Zipkin server address
    #locator: # alternatively, look up the Zipkin server address from the registry
    #  discovery:
    #    enabled: true
    sender:
      type: web # send data over HTTP (instead of RabbitMQ)
  sleuth:
    sampler:
      probability: 1 # sampling rate for collected traces; 1 = 100%
logging:
  config: classpath:logback-spring.xml # location of logback-spring.xml
  file: ${spring.application.name}.log # log file location
info:
  app:
    name: sleuthservice
    description: test
...
```

3. Send the logs to the ELK server by configuring logback-spring.xml:

```xml
<?xml version="1.0" encoding="UTF-8"?>
<configuration>
    <!-- Registers the converters for %-prefixed keywords such as %clr and %wEx -->
    <include resource="org/springframework/boot/logging/logback/defaults.xml"/>
    <springProperty scope="context" name="springAppName" source="spring.application.name"/>
    <!-- Log output location -->
    <property name="LOG_FILE" value="${BUILD_FOLDER:-build}/${springAppName}"/>
    <!-- Console log format -->
    <property name="CONSOLE_LOG_PATTERN" value="%clr(%d{yyyy-MM-dd HH:mm:ss.SSS}){faint} %clr(${LOG_LEVEL_PATTERN:-%5p}) %clr(${PID:- }){magenta} %clr(---){faint} %clr([%15.15t]){faint} %m%n${LOG_EXCEPTION_CONVERSION_WORD:-%wEx}"/>

    <!-- Appender that writes logs to the console -->
    <appender name="console_appender" class="ch.qos.logback.core.ConsoleAppender">
        <filter class="ch.qos.logback.classic.filter.ThresholdFilter">
            <level>INFO</level>
        </filter>
        <!-- Log encoding -->
        <encoder>
            <pattern>${CONSOLE_LOG_PATTERN}</pattern>
            <charset>utf8</charset>
        </encoder>
    </appender>

    <!-- Appender that sends logs to Logstash -->
    <appender name="logstash_appender" class="net.logstash.logback.appender.LogstashTcpSocketAppender">
        <destination>192.168.233.147:5044</destination> <!-- ELK server address -->
        <!-- Encode each log event as JSON -->
        <encoder class="net.logstash.logback.encoder.LoggingEventCompositeJsonEncoder">
            <providers>
                <timestamp>
                    <timeZone>UTC</timeZone>
                </timestamp>
                <pattern>
                    <pattern><!-- The following JSON template was copied from an example found online -->
                        {
                        "severity": "%level",
                        "service": "${springAppName:-}",
                        "trace": "%X{X-B3-TraceId:-}",
                        "span": "%X{X-B3-SpanId:-}",
                        "exportable": "%X{X-Span-Export:-}",
                        "pid": "${PID:-}",
                        "thread": "%thread",
                        "class": "%logger{40}",
                        "rest": "%message"
                        }
                    </pattern>
                </pattern>
            </providers>
        </encoder>
    </appender>

    <!-- Root logger: send logs to both the console and Logstash -->
    <root level="INFO">
        <appender-ref ref="console_appender"/>
        <appender-ref ref="logstash_appender"/>
    </root>
</configuration>
```
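To make the JSON template above concrete, the hypothetical sketch below builds the same event shape by hand: an ISO-8601 UTC `@timestamp` (the role of the `<timestamp>` provider) plus the fields of the `<pattern>` provider, all as strings, as in the template. It only illustrates what one event sent to Logstash looks like; it is not how logstash-logback-encoder is implemented. The `exportable` field carries Sleuth's export flag, which is `true` for sampled spans, so with `spring.sleuth.sampler.probability: 1` it should be `true` for every request. The class `LogEventJson` and the sample values in `main` are assumptions.

```java
import java.time.Instant;
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical illustration: one log event with the same fields as the logback JSON template.
public class LogEventJson {

    // Minimal JSON string escaping for quotes, backslashes and control characters.
    static String escape(String s) {
        StringBuilder sb = new StringBuilder();
        for (char c : s.toCharArray()) {
            switch (c) {
                case '"': sb.append("\\\""); break;
                case '\\': sb.append("\\\\"); break;
                case '\n': sb.append("\\n"); break;
                case '\r': sb.append("\\r"); break;
                case '\t': sb.append("\\t"); break;
                default:
                    if (c < 0x20) sb.append(String.format("\\u%04x", (int) c));
                    else sb.append(c);
            }
        }
        return sb.toString();
    }

    public static String toJson(Instant time, String level, String service, String traceId,
                                String spanId, boolean exportable, long pid, String thread,
                                String logger, String message) {
        Map<String, String> fields = new LinkedHashMap<>(); // keeps the template's field order
        fields.put("@timestamp", time.toString());           // ISO-8601 instant in UTC
        fields.put("severity", level);
        fields.put("service", service);
        fields.put("trace", traceId);                         // same ID Sleuth prints in the console prefix
        fields.put("span", spanId);
        fields.put("exportable", String.valueOf(exportable)); // true when the span is sampled
        fields.put("pid", String.valueOf(pid));
        fields.put("thread", thread);
        fields.put("class", logger);
        fields.put("rest", message);

        StringBuilder json = new StringBuilder("{");
        for (Map.Entry<String, String> e : fields.entrySet()) {
            if (json.length() > 1) json.append(',');
            json.append('"').append(escape(e.getKey())).append("\":\"")
                .append(escape(e.getValue())).append('"');
        }
        return json.append('}').toString();
    }

    public static void main(String[] args) {
        System.out.println(toJson(Instant.parse("2021-01-01T00:00:00Z"), "INFO", "sleuthservice",
                "0c4d974f0a4b0ce6", "0c4d974f0a4b0ce6", true, 4860, "main",
                "c.y.s.DemoController", "say \"hi\""));
    }
}
```

Because each event is escaped onto a single line, it fits the `json_lines` framing the Logstash input expects.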